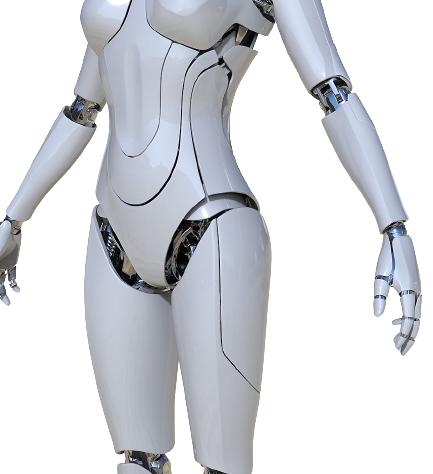

The world of “apply butter” presentations, favoritism, and make-believe is gone with the wind. A new generation of leaders is setting the world spinning the other way, and they are already doing it. Here is a two-paragraph take on what executives and leadership teams are looking for. An unimaginable world fused with AI has started spinning. Read on: **CLICK THE IMAGE**, or scroll down for other articles.